In this analysis we will explore upgrade rates in New Publish and Buffer Classic. We will aim to better understand these upgrade rates and why they might differ.

Conclusions

Controlling for things like trial starts, mobile signups, and Business upgrades shows us that the upgrade rates of New Publish and Buffer Classic are close, but not quite equal. One potentially significant difference between the two apps is that more Buffer Classic users upgrade at a later date (for example, approximately 24% of upgrades in Buffer Classic occur after day 15, compared to only 18% of upgrades in New Publish). That may be due to more re-engagement initiatives in Buffer Classic, the availability of trials, or another retention loop that I am not aware of.

I might look towards achieving parity in retention rates in the hope that upgrade rates would follow.

Data Collection

We will use the user_upgrade_facts table, which records whether a new user upgraded and, if so, which plan they upgraded to. Users that have access to New Publish are in the enabled group of the experiment named new_publish_new_buffer_free_users_phase_x, and users that have access to Buffer Classic are in the control group.

We will gather all users that have been added to this experiment and analyze their data that is in the user_upgrade_facts table. We will exclude users that signed up with a trial start CTA, as trialists are automatically put into Buffer Classic.

    select
      u.id as user_id
      , date(u.created_at) as signup_date
      , ue.name as experiment
      , ue."group" as ex_group
      , date(uf.first_charge_at) as upgrade_date
      , uf.first_charge_plan_id as first_plan_id
      , uf.first_charge_simplified_plan_id as first_simplified_plan
      , uf.did_user_upgrade
      , uf.did_user_upgrade_to_awesome
      , uf.did_user_upgrade_to_business
      , sa.id
      , count(distinct st.stripe_event_id) as number_of_trials
    from dbt.users as u
    inner join dbt.user_experiments as ue
      on u.id = ue.user_id
      and ue.name like 'new_publish_new_buffer_free_users_phase%'
    left join dbt.user_upgrade_facts as uf
      on u.id = uf.user_id
    left join dbt.stripe_trials as st
      on u.id = st.user_id
    left join dbt.signup_attributions as sa
      on u.id = sa.user_id
    -- exclude users whose trial started within 60 seconds of signup (trial start CTA signups)
    where (st.seconds_to_trial_start is null or st.seconds_to_trial_start >= 60)
    group by 1,2,3,4,5,6,7,8,9,10,11

This query returns 315 thousand users that have signed up for Buffer since August 23, 2018.

Calculating Upgrade Rates

To calculate this metric, we’ll divide the number of users that signed up in a given week and upgraded by the total number of users that signed up that week. We’ll segment this metric by their experiment group, control or enabled. There are a couple of factors that we need to control for. The biggest one is a bug in New Publish that prevented users from upgrading until the end of September. To control for this, we will only include users that signed up since October 1.
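
As a rough illustration of the calculation described above, here is a minimal Python sketch. It is not the code behind the plots in this analysis. The rows are made-up examples shaped like the query output, and defining a signup week as starting on Monday is an assumption made only for this sketch.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical rows shaped like the query output:
# (signup_date, ex_group, did_user_upgrade). Not real Buffer data.
users = [
    (date(2018, 9, 20), "control", True),   # before the cutoff, dropped
    (date(2018, 10, 1), "control", True),
    (date(2018, 10, 3), "control", False),
    (date(2018, 10, 2), "enabled", False),
    (date(2018, 10, 4), "enabled", True),
    (date(2018, 10, 9), "enabled", False),
    (date(2018, 10, 10), "control", False),
]

CUTOFF = date(2018, 10, 1)  # only keep signups on or after October 1


def week_start(d):
    """Return the Monday of the week containing d (assumed week definition)."""
    return d - timedelta(days=d.weekday())


def weekly_upgrade_rates(rows, cutoff=CUTOFF):
    """Map (signup_week, ex_group) to upgraded users / total signups."""
    signups = defaultdict(int)
    upgrades = defaultdict(int)
    for signup_date, ex_group, upgraded in rows:
        if signup_date < cutoff:
            continue
        key = (week_start(signup_date), ex_group)
        signups[key] += 1
        upgrades[key] += int(upgraded)
    return {key: upgrades[key] / signups[key] for key in signups}


rates = weekly_upgrade_rates(users)
for (week, group), rate in sorted(rates.items()):
    print(week, group, f"{rate:.2f}")
```

Each rate is tied to the week a user signed up, not the week they upgraded, so recent signup weeks will naturally show lower rates.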


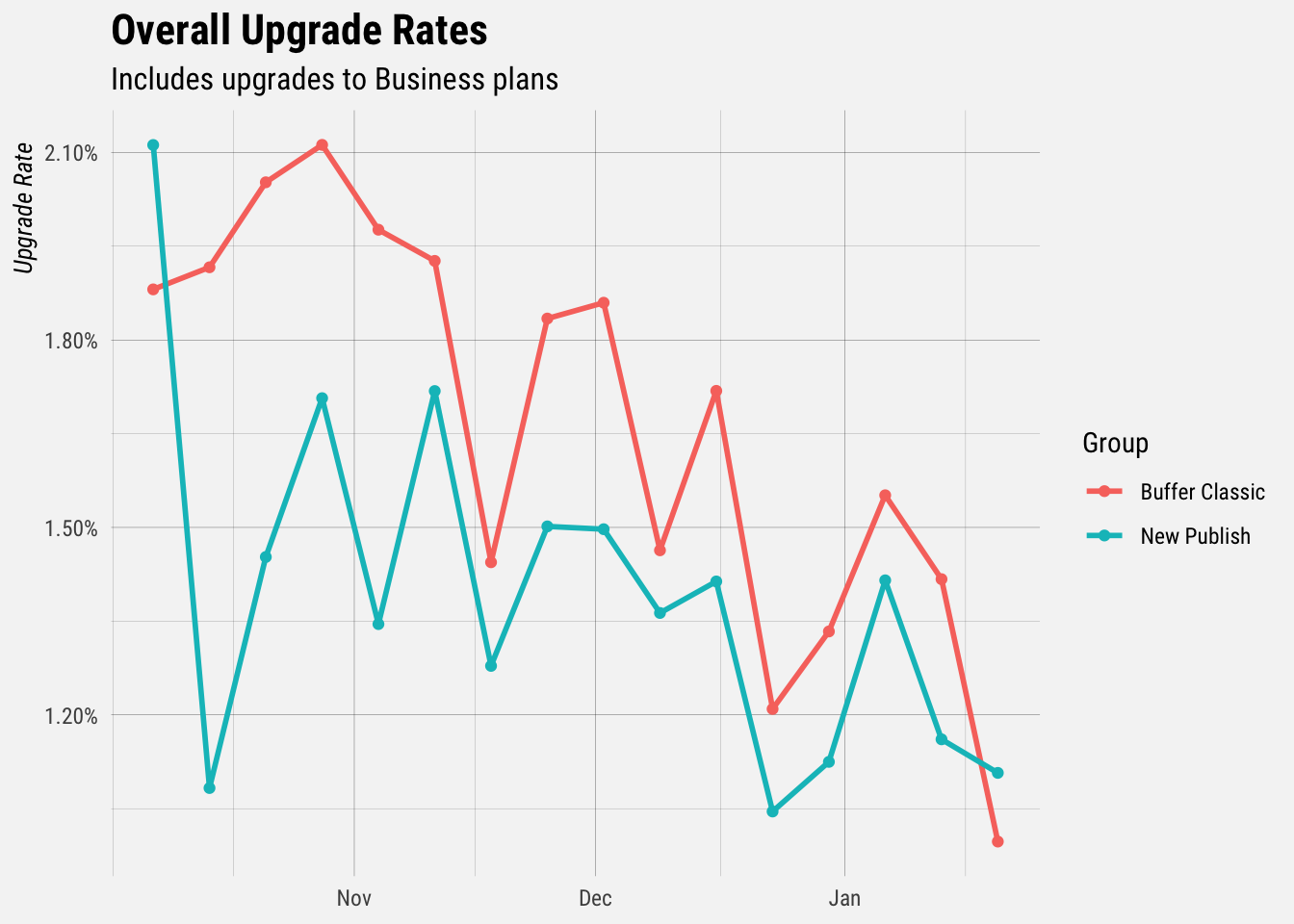

As we can see, upgrade rates in Buffer Classic have been consistently higher than upgrade rates in New Publish. The overall upgrade rates decline because users that have signed up most recently have had the least amount of time to upgrade.

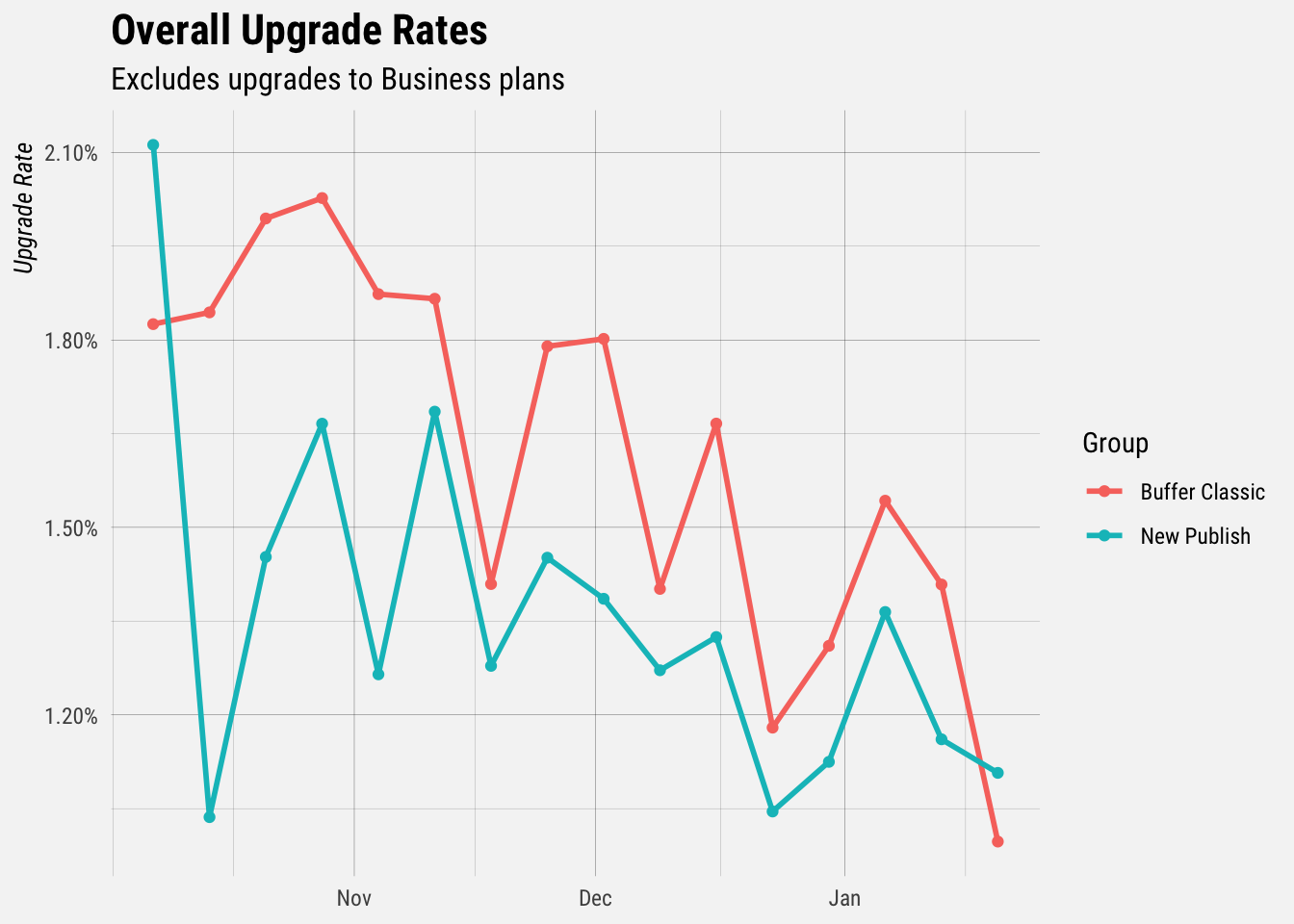

One important thing to remember is that New Publish users cannot upgrade to Business plans. Let’s exclude all users that upgrade to Business plans and recreate the plot.


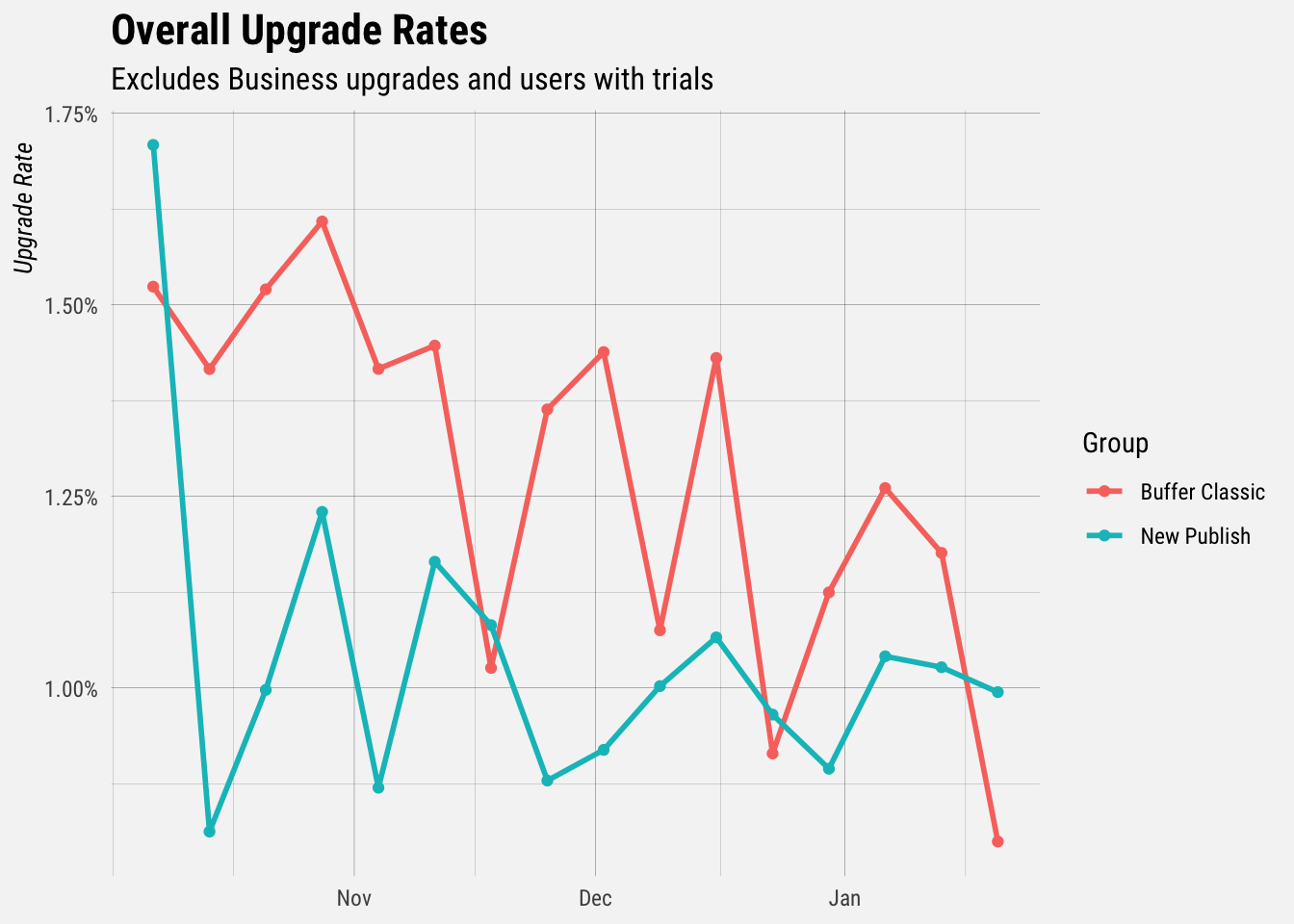

We can see that the plot looks roughly the same, because relatively few users upgrade to Business plans. Another thing to remember is that users that sign up with trials are automatically placed in Buffer Classic. What if we removed all users with trials?


We can see that the upgrade rates move closer together. There are some weeks in which the upgrade rate of New Publish is higher than that of Buffer Classic, but, for the most part, Buffer Classic is still higher.

Segmenting by Plan

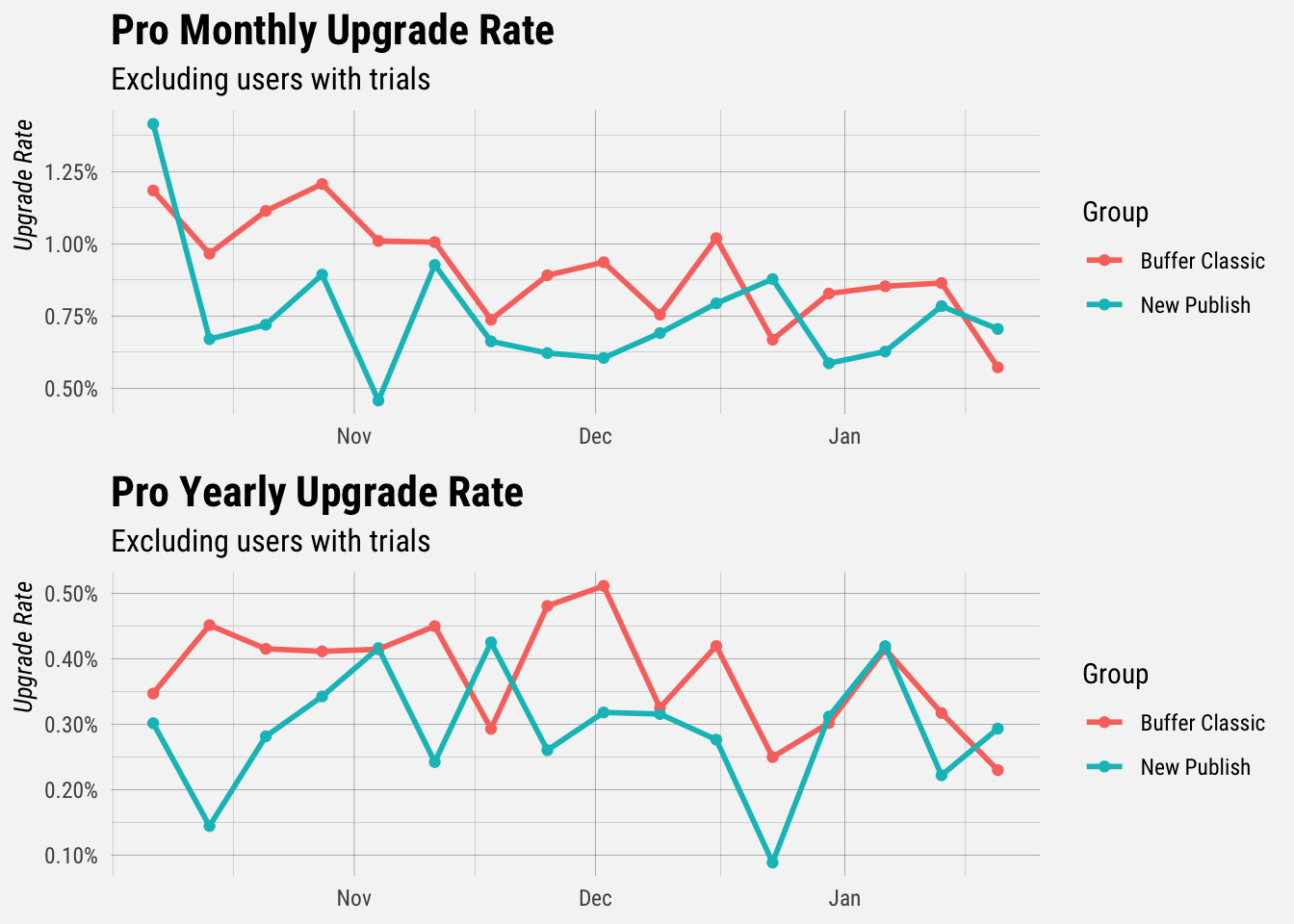

Let’s focus on the two most popular plans, pro_v1_monthly and pro_v1_yearly.


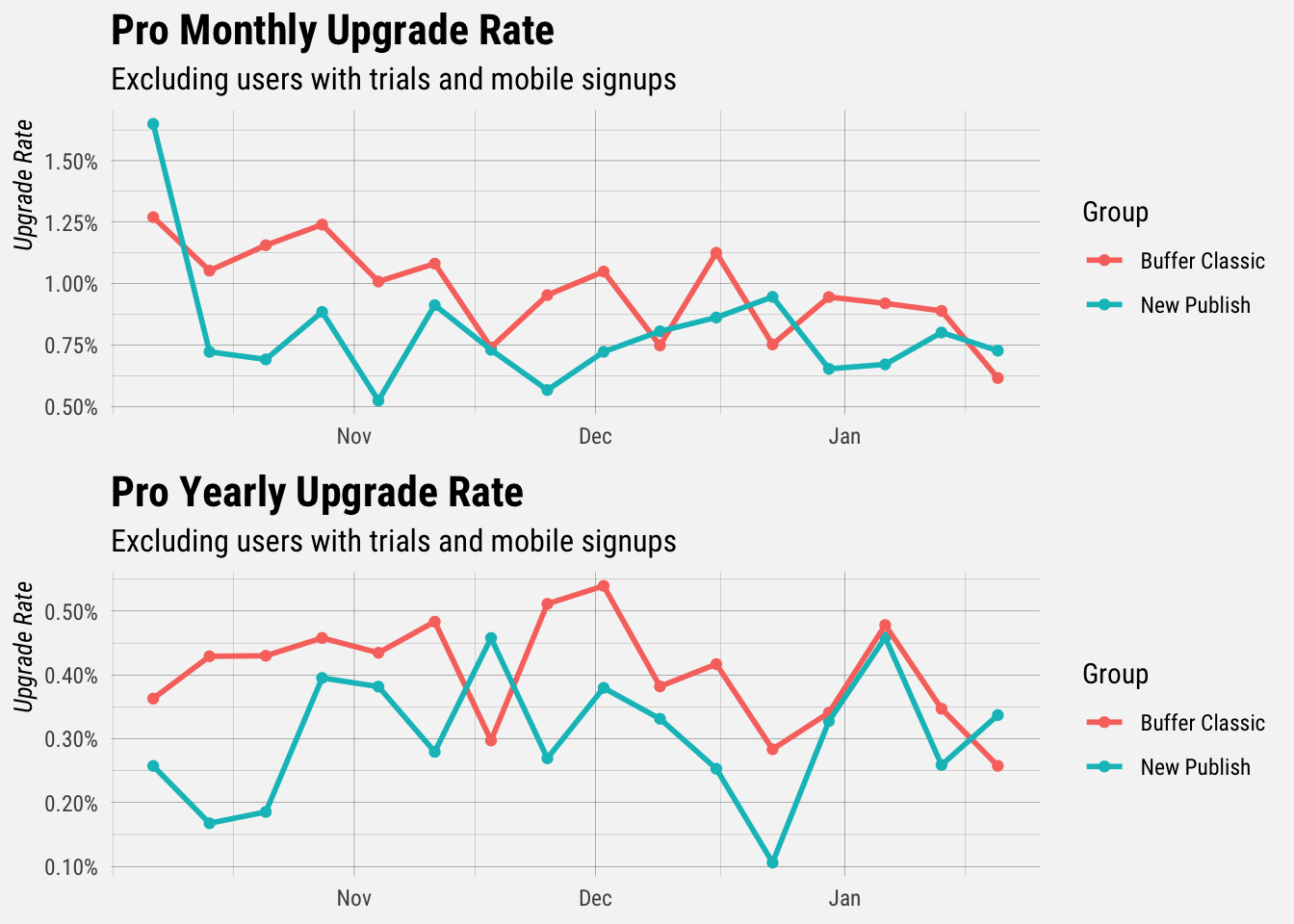

If we isolate these two important plans, we see that the upgrade rates are quite close for users that signed up in recent weeks. The upgrade rates are less close for users that signed up before December.

Web and Mobile Signups

Finally, we want to control for users that sign up through one of the mobile apps. We don’t have a great way of attributing signups to one of the mobile apps, but we are pretty good at attributing web signups. Let’s only include users that signed up through web, and exclude business upgrades and users with trials.
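
The three filters described above can be sketched in Python as follows. This is only an illustration on made-up rows. The `is_web_signup` flag is hypothetical (the analysis does not say how web attribution is encoded), and treating `number_of_trials == 0` as "no trials" is an assumption. The other field names follow the query in the Data Collection section.

```python
# Hypothetical users, one dict per user. Not real Buffer data.
users = [
    {"ex_group": "control", "is_web_signup": True, "number_of_trials": 0,
     "did_user_upgrade_to_business": False, "did_user_upgrade": True},
    {"ex_group": "control", "is_web_signup": True, "number_of_trials": 1,
     "did_user_upgrade_to_business": False, "did_user_upgrade": True},
    {"ex_group": "control", "is_web_signup": True, "number_of_trials": 0,
     "did_user_upgrade_to_business": True, "did_user_upgrade": True},
    {"ex_group": "control", "is_web_signup": False, "number_of_trials": 0,
     "did_user_upgrade_to_business": False, "did_user_upgrade": False},
    {"ex_group": "control", "is_web_signup": True, "number_of_trials": 0,
     "did_user_upgrade_to_business": False, "did_user_upgrade": False},
    {"ex_group": "enabled", "is_web_signup": True, "number_of_trials": 0,
     "did_user_upgrade_to_business": False, "did_user_upgrade": True},
    {"ex_group": "enabled", "is_web_signup": True, "number_of_trials": 0,
     "did_user_upgrade_to_business": False, "did_user_upgrade": False},
    {"ex_group": "enabled", "is_web_signup": False, "number_of_trials": 0,
     "did_user_upgrade_to_business": False, "did_user_upgrade": False},
]


def keep(user):
    """Web signups only, no trials, no Business upgrades."""
    return (
        user["is_web_signup"]
        and user["number_of_trials"] == 0
        and not user["did_user_upgrade_to_business"]
    )


def upgrade_rate_by_group(rows):
    """Return {ex_group: upgraded users / total users}."""
    totals, upgraded = {}, {}
    for row in rows:
        group = row["ex_group"]
        totals[group] = totals.get(group, 0) + 1
        upgraded[group] = upgraded.get(group, 0) + int(row["did_user_upgrade"])
    return {group: upgraded[group] / totals[group] for group in totals}


filtered_users = [u for u in users if keep(u)]
print("all users:", upgrade_rate_by_group(users))
print("filtered: ", upgrade_rate_by_group(filtered_users))
```

In this toy data the gap between the groups closes once the filters are applied, which is the kind of effect the controls are meant to reveal; the real data may behave differently.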


Retention

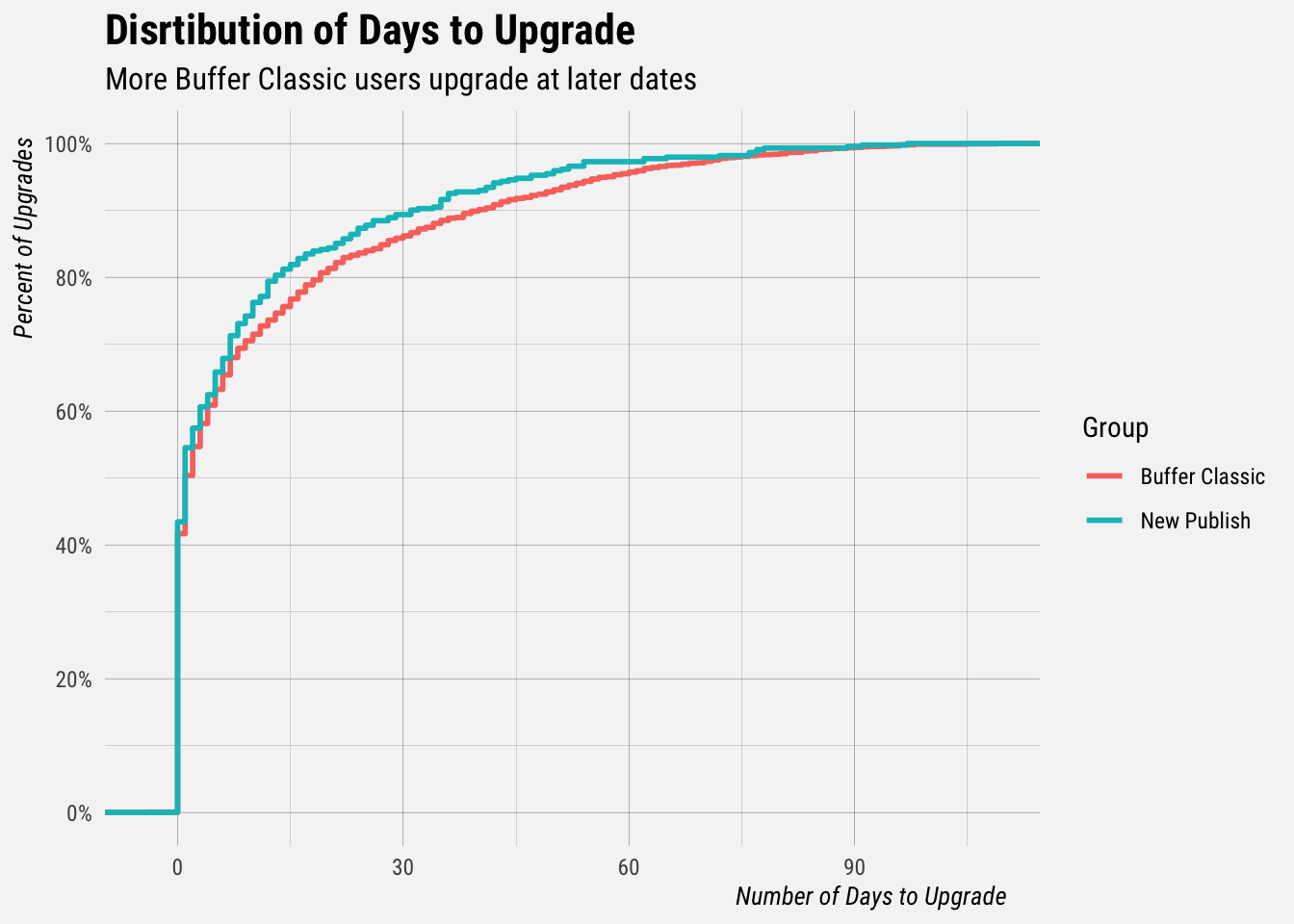

We’ve seen some evidence that New Publish hasn’t quite retained its users at the same rate as Buffer Classic. We also have evidence to suggest that more Buffer Classic users are upgrading at a slightly later date than New Publish users. This could be impacted by the fact that trials are more easily accessed in Buffer Classic.

Let’s look at the distribution of the amount of time it takes users to upgrade in both New Publish and Buffer Classic.

These cumulative distribution functions (CDFs) are not the most straightforward to interpret, but they do show that more users in Buffer Classic upgrade at a later date. For example, approximately 24% of upgrades in Buffer Classic occur after day 15, compared to only 18% of upgrades in New Publish. Approximately 14% of upgrades occur after day 30 in Buffer Classic, compared to around 10% of upgrades in New Publish.
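
To make the "share of upgrades after day N" reading of a CDF concrete, here is a small Python sketch. The day counts are invented toy values, not the data behind the figures quoted above, and counting "after day 15" as strictly more than 15 days from signup is an assumption.

```python
from datetime import date


def days_to_upgrade(signup_date, upgrade_date):
    """Whole days between signup and first charge."""
    return (upgrade_date - signup_date).days


def share_after(days, threshold):
    """Fraction of upgrades more than `threshold` days after signup.

    This is 1 minus the empirical CDF evaluated at `threshold`.
    """
    if not days:
        raise ValueError("need at least one upgrade")
    return sum(d > threshold for d in days) / len(days)


# Toy days-to-upgrade values for upgraded users in each app (hypothetical).
buffer_classic = [0, 1, 2, 5, 16, 20, 31, 40]
new_publish = [0, 0, 1, 3, 4, 7, 10, 18]

for threshold in (15, 30):
    print(
        f"after day {threshold}:",
        f"classic={share_after(buffer_classic, threshold):.3f}",
        f"new_publish={share_after(new_publish, threshold):.3f}",
    )
```

Only users who upgraded enter the denominator here, so the result describes the timing of upgrades, not the overall upgrade rate.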

Conclusions

Controlling for things like trial starts, mobile signups, and Business upgrades shows us that the upgrade rates of New Publish and Buffer Classic are close, but not quite equal. One difference between the two apps that may be significant is that more Buffer Classic users upgrade at a later date. That may be due to more re-engagement initiatives in Buffer Classic, the availability of trials, or another retention loop that I am not aware of.

I might look towards achieving parity in retention rates in the hope that upgrade rates would follow, but I am not yet sure. Please let me know if you have any thoughts or follow-up questions!